Using Containers for Microservices: Benefits and Challenges for your Organization

Using containers for microservices has gained a lot of popularity over the last decade. Building an application as microservices that run across multiple containers offers the best of both worlds: resilience, plus agility through independent scaling and improvement of each service. Before we dive into how containers and microservices form an ideal combination, let’s start with a basic understanding of microservices and containers.

Morgan Perry

April 25, 2022 · 6 min read

Microservices is an architectural paradigm for developing software systems that focuses on building single-function modules with well-defined interfaces and operations.

Containerization is the packaging of software code with all its necessary components, such as libraries, runtime, frameworks, and other dependencies, so that it runs isolated in its own "container".

In this article, we will discuss the benefits of using containers with microservices and some challenges faced by using this combination.

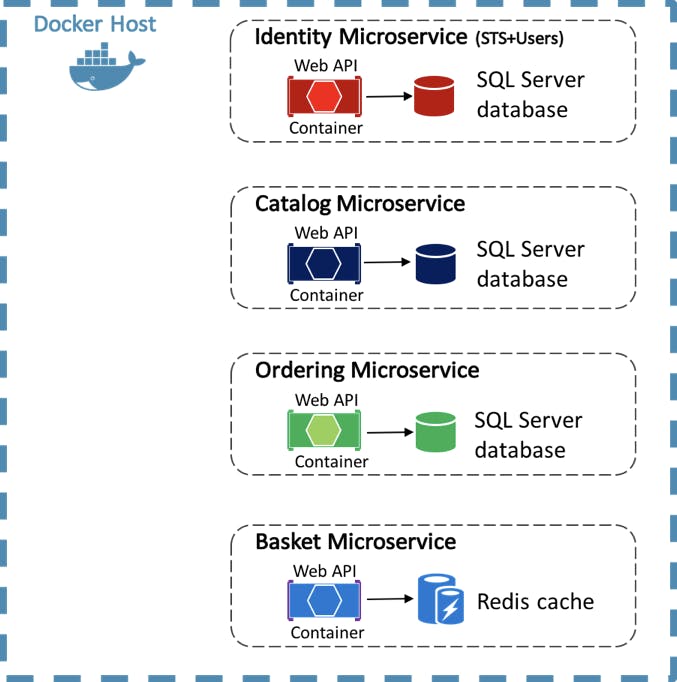

The diagram above illustrates an example of how microservices look when deployed on containers.

#Various options for deploying microservices

When it comes to the hosting model for your microservices' computing resources, containers are not the only option. Here are some other options for hosting microservices:

- Using virtual machines (VMs). It's generally not recommended to host microservices inside VMs. You cannot deploy everything to a single VM; otherwise, it becomes a single point of failure. Once you deploy to multiple VMs, you need to connect them together. VMs are a better fit for a monolithic application than for microservices.

- Serverless functions. These provide isolated environments that run code preconfigured to respond to triggers such as a user login request.

#Why containers are the best option for microservices

We all know that containers are much faster and more lightweight than VMs. But that’s not the only reason containers are best suited for microservices. Let’s go through some vital benefits we get by using containers for microservices.

#Better performance and low infrastructure footprint

Virtual machines might take up to a few minutes to start, but containers, being lightweight, usually start in a couple of seconds. It's easier to take advantage of the agility of microservices when they are deployed in containers instead of VMs.

#Better Security

Containers provide better isolation for each containerized microservice. A microservice has a smaller attack surface and is isolated from other microservices in its own container. This limits the chance that a security vulnerability exploited in one container spreads to another. By contrast, microservices deployed side by side directly on a host OS or inside a shared virtual machine are less isolated from one another.

#Ease for developers

Containerized microservices make developers’ lives easier. Because each microservice is a relatively small, self-contained component, developers can work on their own specific tasks without getting involved in the complexity of the overall application. Containerized applications also give them the freedom to develop each service in the language that suits the needs of that service.

#Service discovery

Service discovery is a crucial aspect of any SOA-based architecture. If your microservices are hosted in containers, it is easier for them to locate and communicate with each other. If you deploy microservices in virtual machines, each host may have a different networking configuration. This makes it difficult to design a network architecture that can support reliable service discovery.
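To make the idea concrete, here is a minimal sketch of a service registry, assuming a simple in-memory design. The `ServiceRegistry` class, its method names, and the addresses are hypothetical and only for illustration; real platforms usually provide discovery through DNS or a dedicated registry service.

```python
class ServiceRegistry:
    """In-memory registry mapping a service name to its container addresses.

    Hypothetical illustration only, not the API of any real platform.
    """

    def __init__(self) -> None:
        self._instances: dict[str, list[str]] = {}

    def register(self, service: str, address: str) -> None:
        # Called when a container starts and is ready to serve traffic.
        addresses = self._instances.setdefault(service, [])
        if address not in addresses:
            addresses.append(address)

    def deregister(self, service: str, address: str) -> None:
        # Called when a container stops; unknown addresses are ignored.
        addresses = self._instances.get(service, [])
        if address in addresses:
            addresses.remove(address)

    def lookup(self, service: str) -> list[str]:
        # Return a copy so callers cannot mutate the registry's state.
        return list(self._instances.get(service, []))


registry = ServiceRegistry()
registry.register("orders", "10.0.1.12:8080")
registry.register("orders", "10.0.1.13:8080")
registry.deregister("orders", "10.0.1.12:8080")
print(registry.lookup("orders"))  # ['10.0.1.13:8080']
```

Because containers come and go, callers look up addresses at request time instead of hard-coding them.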

#Easier Orchestration

It is easier to orchestrate, schedule, start, stop and restart microservices when they run in containers on a shared platform.
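As a rough illustration of what an orchestrator does when it starts and stops services, the sketch below compares a desired state with the running state and lists the actions needed to close the gap. The `reconcile` function and the service names are assumptions made for this example; real orchestrators are far more involved.

```python
def reconcile(desired: dict[str, int],
              running: dict[str, int]) -> list[tuple[str, str, int]]:
    """Return the start/stop actions that move the running state to the desired state."""
    actions = []
    # Visit every service named in either state, in a stable order.
    for service in sorted(set(desired) | set(running)):
        diff = desired.get(service, 0) - running.get(service, 0)
        if diff > 0:
            actions.append(("start", service, diff))
        elif diff < 0:
            actions.append(("stop", service, -diff))
    return actions


desired = {"orders": 3, "payments": 2}
running = {"orders": 1, "payments": 2, "legacy": 1}
print(reconcile(desired, running))
# [('stop', 'legacy', 1), ('start', 'orders', 2)]
```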

#More Tools

The tools that support microservices with containers have significantly matured over the last few years. Orchestration platforms for containers, such as Amazon ECS and Amazon EKS (Kubernetes), have gained a lot of popularity and have good community support today. On the other hand, there are few tools to orchestrate microservices hosted in unikernels or VMs.

#What are the challenges of using containers for microservices?

While there are many benefits to using containers for microservices, there are also some challenges. Here is a summary of the main ones:

#Increased complexity for your workforce

Containers add a layer of abstraction, which adds complexity to management, monitoring, and debugging. Many developers struggle with the abstract nature of containers, especially when implementing microservices in an extensive application.

Containers run on dynamic infrastructure, which means they are continuously starting up and shutting down based on load. This can make deployment, management, and monitoring more challenging.
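To give a sense of how load drives the number of running containers, here is a small sketch of a proportional scaling rule, similar in spirit to the one documented for the Kubernetes Horizontal Pod Autoscaler. The function name, the default limits, and the metric values are assumptions for illustration, and refinements such as tolerance bands and stabilization windows are left out.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale the replica count in proportion to observed versus target load."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp so the service never drops below the minimum or exceeds the maximum.
    return max(min_replicas, min(max_replicas, raw))


# 4 containers at 90% average CPU with a 60% target: scale out.
print(desired_replicas(4, 90, 60))  # 6
# The same 4 containers at 30% average CPU: scale in.
print(desired_replicas(4, 30, 60))  # 2
```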

If microservices are written in different languages, that adds another layer of complexity.

#Learning curve

Developers must be familiar with Docker, Kubernetes, or similar tools to handle container orchestration. Your servers must also be set up for the container runtimes you use and for the network and storage resources each one requires. One way to reduce this learning curve is to use managed container services like AWS ECS and AWS EKS.

#Persistent data storage

As containers are disposable, ephemeral environments, you will need a method of writing data to persistent storage outside of your containers.
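As a minimal sketch of this idea, the code below writes records to a directory that is assumed to be a volume mounted from outside the container. The helper names and the record layout are hypothetical, and the example uses a temporary directory to stand in for the mounted volume so that it runs anywhere.

```python
import json
import os
import tempfile
from pathlib import Path


def save_order(data_dir: Path, order_id: str, order: dict) -> Path:
    """Write one record to the (assumed) mounted volume and return its path."""
    data_dir.mkdir(parents=True, exist_ok=True)
    target = data_dir / f"{order_id}.json"
    # Write to a temporary file, then rename, so readers never see a partial file.
    tmp = data_dir / f"{order_id}.json.tmp"
    tmp.write_text(json.dumps(order))
    os.replace(tmp, target)
    return target


def load_order(data_dir: Path, order_id: str) -> dict:
    """Read a record back, for example after the container was replaced."""
    return json.loads((data_dir / f"{order_id}.json").read_text())


# A temporary directory stands in for the mounted volume in this demo.
# In a container, data_dir would be the mount path of the external volume.
with tempfile.TemporaryDirectory() as volume:
    save_order(Path(volume), "A-1001", {"item": "book", "qty": 2})
    print(load_order(Path(volume), "A-1001"))  # {'item': 'book', 'qty': 2}
```

Anything written to the container's own filesystem outside such a mount is lost when the container is removed.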

#What factors to consider when using containers for microservices

It is essential to plan and evaluate the important factors in successfully containerizing your microservices. Here are some of those factors:

#Container runtime

A key consideration of containerized microservices is your container runtime. Containerized microservices are easier to manage when accompanied by a complete set of configuration management tools instead of simply deploying container runtimes on their own. Common examples of container runtimes include runC, Docker, Windows Containers, etc. Regardless of which container runtime you use, make sure it conforms to the specifications dictated by the Open Container Initiative (OCI).

#Plan for External Storage

As container data typically disappears once the instance shuts down, your application must implement an external storage mechanism. Many orchestration tools come with data storage solutions. When comparing orchestration tools, review the storage features of each to choose the best one for your organization’s needs. AWS ECS, for example, supports several types of persistent volumes, including EFS, FSx for Windows File Server, and Docker volumes.

#Service orchestration

When working with a large number of containers, it's crucial to use orchestration tools that automate operational tasks such as load balancing among containers and managing shared storage. While Kubernetes is the de facto go-to container orchestrator, especially if your application uses Docker as the container runtime, other container management platforms are designed for specialized cases. AWS ECS and EKS are equipped with enterprise-level features like large-scale workflow automation and integrated CI/CD pipelines.

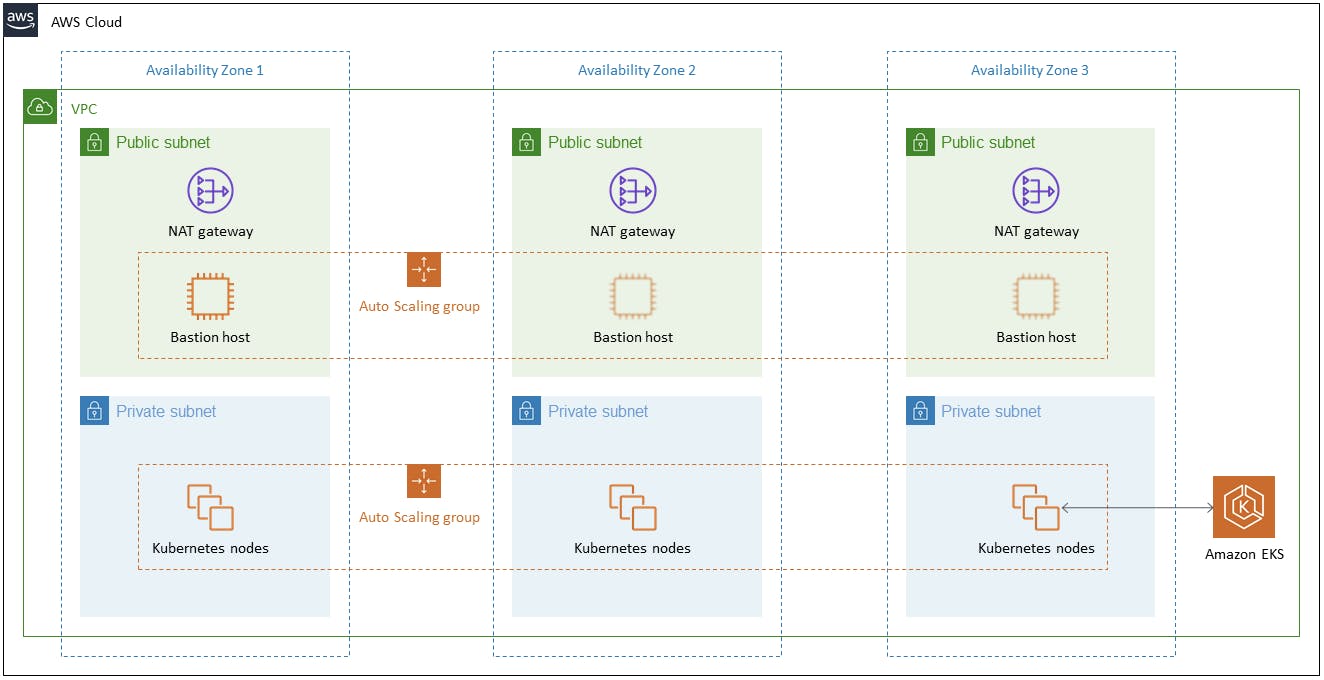

The diagram above shows an example of AWS EKS used for container orchestration.
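Load balancing among containers, one of the operational tasks mentioned in this section, can be sketched with a simple round-robin picker. This is only an illustration under assumed names and addresses; managed load balancers also handle health checks, connection draining, and weighting, which are omitted here.

```python
import itertools
from typing import Iterator


def round_robin(backends: list[str]) -> Iterator[str]:
    """Return an iterator that yields backend addresses in turn, cycling forever."""
    if not backends:
        raise ValueError("at least one backend is required")
    return itertools.cycle(backends)


picker = round_robin(["10.0.1.12:8080", "10.0.1.13:8080", "10.0.1.14:8080"])
first_four = [next(picker) for _ in range(4)]
print(first_four)
# ['10.0.1.12:8080', '10.0.1.13:8080', '10.0.1.14:8080', '10.0.1.12:8080']
```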

#Prioritize Networking and Communication

While deployed independently in their own containers, microservices still need to communicate with each other. The application design should account for networking and communication issues that may arise. Some of the services and tools used for this include AWS load balancers (ALB and NLB), Amazon API Gateway, and VPC.

#Security

Generally speaking, containerized microservices are more secure than VM-based monolith applications because they expose less attack surface. However, microservices often require access to back-end resources. Running containers in privileged mode gives them direct access to the host's root capabilities, which could expose the kernel and other sensitive system components. Besides applying solid IAM practices, security groups, good network policies, and security context definitions, it is equally important to use audit tools that verify whether container configurations meet security requirements, along with container image scanners that automatically detect potential security exposures.
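A configuration audit of this kind can be sketched as a small check over a container specification. The field names below mirror the Kubernetes container `securityContext` (`privileged`, `runAsNonRoot`, `allowPrivilegeEscalation`), but the `audit_container` function, the policy of flagging unset fields, and the sample specs are assumptions made for this example.

```python
def audit_container(spec: dict) -> list[str]:
    """Return a list of findings for one container specification."""
    findings = []
    security = spec.get("securityContext", {})
    if security.get("privileged", False):
        findings.append("runs in privileged mode")
    # Assumed policy: an unset field counts as a finding, not as a pass.
    if not security.get("runAsNonRoot", False):
        findings.append("may run as root (runAsNonRoot is not set)")
    if security.get("allowPrivilegeEscalation", True):
        findings.append("allows privilege escalation")
    return findings


risky = {"name": "orders", "securityContext": {"privileged": True}}
hardened = {
    "name": "payments",
    "securityContext": {
        "privileged": False,
        "runAsNonRoot": True,
        "allowPrivilegeEscalation": False,
    },
}
print(len(audit_container(risky)))  # 3
print(audit_container(hardened))    # []
```

A check like this could run in a CI pipeline so that risky configurations are caught before deployment.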

#Conclusion

In this article, we have discussed the pros and cons of using containers for microservices. Microservices are an excellent option for growing organizations, and containers are the best choice for hosting them. We have also discussed the challenges of containerized microservices, which every company faces when implementing microservices for a complex, multi-component system. A modern solution like Qovery helps you achieve the benefits promised by containerizing microservices without facing the complexities mentioned earlier. Discover Qovery today!

Your Favorite Internal Developer Platform

Qovery is an Internal Developer Platform Helping 50.000+ Developers and Platform Engineers To Ship Faster.

Try it out now!
