Kubernetes architecture: building resilient, multi-tenant control planes

Key Points:

- SaaS Control Planes: Best practices for isolating tenant workloads within a shared architecture.

- Toil Reduction: How component-level visibility prevents "cascading failures" in production.

- Intent-Based Scaling: Moving beyond standard HPA to architecture-aware autoscaling.

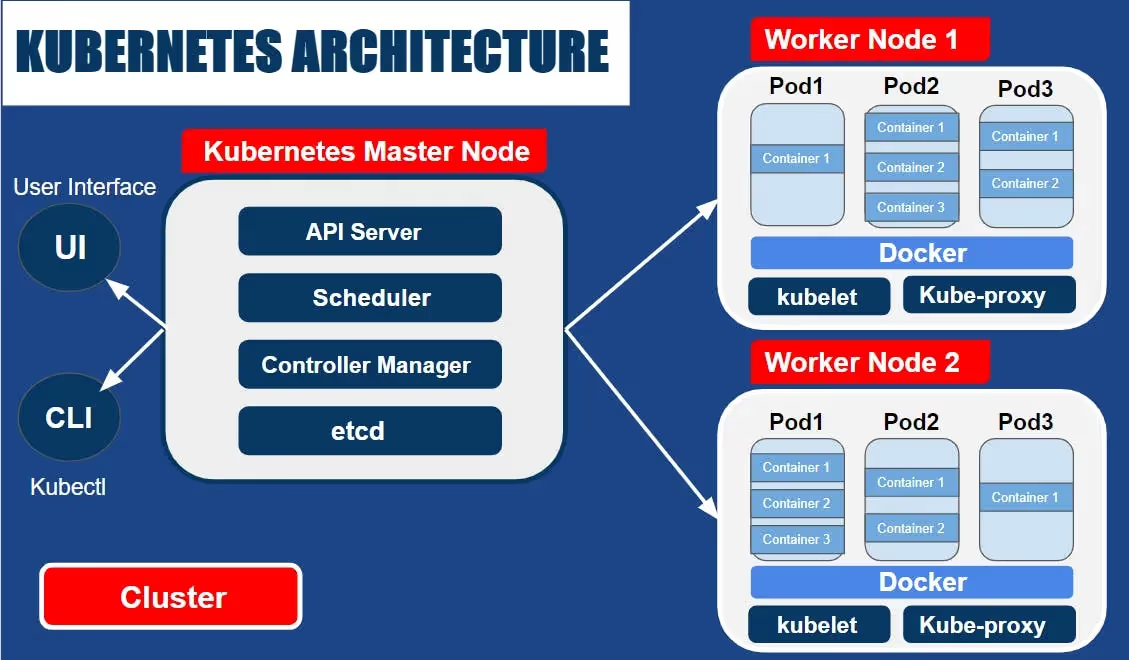

Kubernetes architecture splits into the Control Plane (management) and Data Plane (workloads). For SaaS providers, this architecture must be tuned for high availability and multi-tenancy.

Understanding the interplay between these components is critical to diagnosing bottlenecks that cause operational toil as user demand spikes.

Kubernetes Architecture Overview

Kubernetes promises unmatched scalability, reliability, and container orchestration for modern applications. But what actually happens beneath the surface when you hit 'deploy'?

For many developers and DevOps teams, Kubernetes (K8s) can feel like a powerful black box. This article provides a comprehensive overview of the core structure, detailing the roles of the master and worker components, and explaining essential building blocks like Pods, Services, and Deployments.

Master-Worker Architecture

Kubernetes follows a master-worker architecture (historically referred to as master-slave). Here’s a simple explanation:

- Control Plane (Master Node): The "brain" of Kubernetes. It makes global decisions about the cluster (like scheduling) and responds to cluster events.

- Worker Nodes: The machines where your applications run. Each worker node runs a Kubelet (the agent), a Container Runtime (like containerd or CRI-O), and Kube-proxy (networking).

Core Components

Here are the main components of Kubernetes:

- Pods: The smallest and simplest unit in the Kubernetes object model. A Pod represents a running process on your cluster and can contain one or more containers.

- Services: An abstraction that defines a logical set of Pods and a policy by which to access them, which is crucial for stable microservice communication.

- Volumes: Essentially a directory accessible to all containers running in a pod, used to store data and maintain application state.

- Namespaces: A way to divide cluster resources between multiple users or tenants. They provide a scope for names and are the foundation of SaaS multi-tenancy.

- Deployments: A controller that provides declarative updates for Pods and ReplicaSets. You describe a desired state, and the controller ensures the actual state matches it.
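To make the Namespaces point concrete, here is a minimal sketch of a per-tenant setup. It assumes one namespace per tenant; the names tenant-a and tenant-a-devs and the quota figures are hypothetical and only illustrate the shape of the objects. The edit ClusterRole is one of the default roles that ship with Kubernetes.

```yaml
# Namespace that scopes everything belonging to one tenant (hypothetical name)
apiVersion: v1
kind: Namespace
metadata:
  name: tenant-a
  labels:
    tenant: tenant-a
---
# Cap the resources this tenant can request (illustrative figures)
apiVersion: v1
kind: ResourceQuota
metadata:
  name: tenant-a-quota
  namespace: tenant-a
spec:
  hard:
    requests.cpu: "4"
    requests.memory: 8Gi
    limits.cpu: "8"
    limits.memory: 16Gi
    pods: "50"
---
# Grant a hypothetical group edit rights inside this namespace only
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: tenant-a-edit
  namespace: tenant-a
subjects:
  - kind: Group
    name: tenant-a-devs          # hypothetical group from your identity provider
    apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole
  name: edit                     # built-in default ClusterRole
  apiGroup: rbac.authorization.k8s.io
```

Because the RoleBinding is namespaced, the permissions stop at the tenant-a boundary even though the role itself is defined cluster-wide.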

Master Components: The Brain of the Fleet

In Kubernetes, the master components make global decisions about the cluster, and they detect and respond to cluster events. Let’s discuss each of these components in detail.

1. API Server

The API Server is the front end of the Kubernetes control plane. It exposes the Kubernetes API, which users, tooling such as kubectl, and the other cluster components use to perform operations on the cluster. The API Server processes REST operations, validates them, and updates the corresponding objects in etcd.

2. etcd

etcd is a consistent and highly available key-value store used as Kubernetes’ backing store for all cluster data. It’s a database that stores the configuration information of the Kubernetes cluster, representing the state of the cluster at any given point in time. If any part of the cluster changes, etcd gets updated with the new state.

3. Scheduler

The Scheduler is a component of the Kubernetes master that is responsible for selecting the best node for the pod to run on. When a pod is created, the scheduler decides which node to run it on based on resource availability, constraints, affinity and anti-affinity specifications, data locality, inter-workload interference, and deadlines.
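The inputs listed above can be expressed directly in a Pod spec. The following is a minimal sketch, not a recommended production configuration: the Pod name, the zone value eu-west-1a, and the resource figures are hypothetical. It shows resource requests, a hard node affinity rule, and a soft anti-affinity rule that the Scheduler weighs when picking a node.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: scheduling-demo            # hypothetical name
  labels:
    app: scheduling-demo
spec:
  containers:
    - name: app
      image: nginx:1.14.2
      resources:
        requests:                  # the Scheduler only considers nodes with this much free capacity
          cpu: 250m
          memory: 256Mi
        limits:
          cpu: 500m
          memory: 512Mi
  affinity:
    nodeAffinity:
      # Hard constraint: only nodes in the given zone are eligible
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
          - matchExpressions:
              - key: topology.kubernetes.io/zone
                operator: In
                values:
                  - eu-west-1a     # hypothetical zone name
    podAntiAffinity:
      # Soft constraint: prefer nodes that do not already run a copy of this app
      preferredDuringSchedulingIgnoredDuringExecution:
        - weight: 100
          podAffinityTerm:
            labelSelector:
              matchLabels:
                app: scheduling-demo
            topologyKey: kubernetes.io/hostname
```

If no node satisfies the required rule, the Pod stays Pending, whereas the preferred rule only influences scoring among eligible nodes.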

4. Controller Manager

The Controller Manager is a daemon that embeds the core control loops shipped with Kubernetes. In other words, it regulates the state of the cluster and performs routine tasks to maintain the desired state. For example, if a pod goes down, the Controller Manager will notice this and start a new pod to maintain the desired number of pods.

Here is example output of the command kubectl get componentstatuses, which checks the health of the core components including the scheduler, controller-manager, and etcd server.

```
NAME                 STATUS    MESSAGE              ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   {"health":"true"}
```

Technical Note: While this command provides a quick historical snapshot, it has been deprecated since Kubernetes v1.19. For enterprise fleets, we recommend monitoring the API Server's /livez and /readyz endpoints for more reliable control plane health data.

Node Components: The Data Plane

Worker nodes host the actual application workloads. To do this, they rely on three essential components:

1. Kubelet

Kubelet is the primary "node agent" that runs on each node. It watches the Kubernetes Control Plane (the master components) for PodSpecs, which are YAML or JSON descriptions of a Pod, and ensures that the containers described in those PodSpecs are running and healthy.
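What "healthy" means is something you can declare in the PodSpec itself. As a minimal sketch, assuming an nginx container that answers HTTP on port 80, the probes below tell the Kubelet how to check the container; the Pod name and timing values are hypothetical.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: probe-demo                 # hypothetical name
spec:
  containers:
    - name: web
      image: nginx:1.14.2
      ports:
        - containerPort: 80
      livenessProbe:               # the Kubelet restarts the container if this check keeps failing
        httpGet:
          path: /
          port: 80
        initialDelaySeconds: 5
        periodSeconds: 10
      readinessProbe:              # the Pod only receives Service traffic while this check passes
        httpGet:
          path: /
          port: 80
        periodSeconds: 5
```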

2. Kube-proxy

Kube-proxy is a network proxy that runs on each node in the cluster, implementing part of the Kubernetes Service concept. It maintains network rules that allow network communication to your Pods from network sessions inside or outside of your cluster.

Kube-proxy ensures that the networking environment (routing and forwarding) is predictable and accessible, but isolated where necessary.

3. Container Runtime

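Container runtime is the software responsible for running containers. Kubernetes supports several container runtimes, including containerd, CRI-O, and any other implementation of the Kubernetes CRI (Container Runtime Interface). Docker Engine is still usable through the cri-dockerd adapter, since the built-in dockershim was removed in Kubernetes v1.24.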

Each runtime offers different features, but all must be able to run containers according to a specification provided by Kubernetes.

How Components Interact: Scheduling a Pod

- The Request: You submit a PodSpec to the API Server.

- The Decision: The Scheduler identifies a node with available resources.

- The Instruction: The API Server records the node assignment, and the Kubelet on the target node, which watches the API Server, picks up the new Pod.

- The Execution: The Kubelet instructs the Container Runtime to pull the image and start the container.

- The Networking: Once the Pod is ready, Kube-proxy updates the node's Service rules (for example iptables or IPVS) so that any Service selecting the Pod can route traffic to it.

The 1,000-Cluster Reality: Why Definitions Change at Scale

For a small team, the architectural definitions above are enough to get an app running. But for Enterprise SaaS, running these components crosses the boundary from Day-1 deployment into the infinite loop of Day-2 operations.

At a scale of 10, 100, or 1,000+ clusters, managing Pods, Volumes, and Services manually via YAML files leads to massive configuration drift and operational toil. As we dive into the configurations below, consider how these standard building blocks must be managed via centralized, agentic automation to maintain fleet-wide stability.

Pods and Services

In a distributed SaaS architecture, managing individual containers by hand is impractical. Kubernetes abstracts this complexity using Pods and Services.

Pods: The Atomic Unit

A Pod is the smallest deployable unit in Kubernetes. It represents a single instance of a running process in your cluster.

- Shared Context: Containers within a Pod share the same network namespace (IP and ports) and storage volumes.

- Ephemeral Nature: Pods are not intended to be "repaired." If a Pod fails, the Control Plane replaces it with a new one.

Sample configuration

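Here’s a simple example of a Pod and Service definition in YAML. In this example, the Pod my-app runs a single container that listens on port 9376 and carries the label app: my-app. The Service my-service selects Pods by that label, exposes the application on port 80, and routes network traffic to the my-app Pod on port 9376.

```yaml
# Pod definition
apiVersion: v1
kind: Pod
metadata:
  name: my-app
  labels:
    app: my-app            # must match the Service selector below
spec:
  containers:
    - name: my-app
      image: my-app:1.0
      ports:
        - containerPort: 9376   # port the application listens on
---
# Service definition
apiVersion: v1
kind: Service
metadata:
  name: my-service
spec:
  selector:
    app: my-app            # selects Pods carrying this label
  ports:
    - protocol: TCP
      port: 80             # port exposed by the Service
      targetPort: 9376     # port on the Pod that receives the traffic
```

🚀 Real-world proof: how Hyperline eliminated the need for dedicated DevOps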

By leveraging Qovery to manage multi-environment clusters, Hyperline removed the infrastructure bottleneck for their fintech platform.

⭐ The Result: Eliminated the need for a dedicated DevOps hire, accelerated time-to-market, and significantly enhanced developer confidence.

Read the full Hyperline case study here.

Volumes and Persistent Storage

What are Volumes and Persistent Storage?

In Kubernetes, managing storage is a distinct concept from managing compute instances. Let’s dive into it.

- A Volume in Kubernetes is a directory, possibly with some data in it, which is accessible to the containers in a pod. It’s a way for pods to store and share data. A volume’s lifecycle is tied to the pod that encloses it.

- A Persistent Volume (PV) is a piece of storage in the cluster that has been provisioned by an administrator or dynamically provisioned using Storage Classes. It is a resource in the cluster just like a node is a cluster resource.

How Kubernetes Handles Storage and Data Persistence

Kubernetes uses Persistent Volume Claims (PVCs) to handle storage and data persistence. A PVC is a request for storage by a user. It is similar to a pod in that pods consume node resources and PVCs consume PV resources.
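A claim only becomes useful once a Pod mounts it. The sketch below assumes a claim named my-pvc already exists in the same namespace (the same name this section's configuration snippet uses); the Pod name and mount path are hypothetical.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: pvc-demo                   # hypothetical name
spec:
  containers:
    - name: app
      image: nginx:1.14.2
      volumeMounts:
        - name: data
          mountPath: /usr/share/nginx/html   # where the storage appears inside the container
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: my-pvc          # assumes this claim exists in the Pod's namespace
```

The Pod refers only to the claim, never to the underlying PersistentVolume, which is what keeps the workload portable across storage backends.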

Example configuration snippet

Here’s an example of a PersistentVolume and PersistentVolumeClaim in YAML. The my-pvc claim requests 500Mi and can bind to the 1Gi my-pv volume because the storage class and access mode match. Binding is one-to-one, so the claim holds the whole volume rather than a 500Mi slice of it.

```yaml
# PersistentVolume
apiVersion: v1
kind: PersistentVolume
metadata:
  name: my-pv
spec:
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  storageClassName: slow
  hostPath:
    path: "/mnt/data"
---
# PersistentVolumeClaim
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: my-pvc
spec:
  storageClassName: slow
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 500Mi
```

Networking in Kubernetes

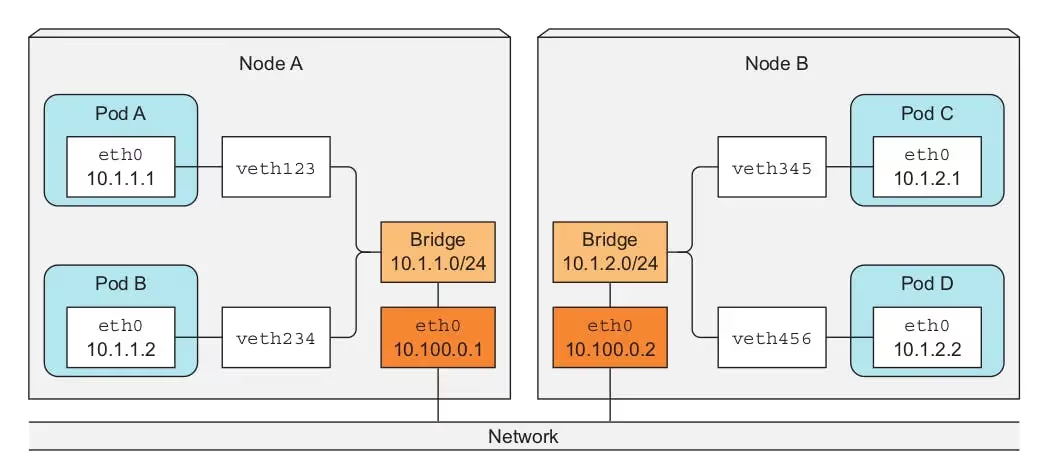

Networking is a central part of Kubernetes, but it can be challenging to understand exactly how it is expected to work.

Overview of Networking Concepts in Kubernetes

In Kubernetes, every pod has a unique IP address, and every node has its own IP address as well. Pods can therefore reach one another directly, without NAT or port mapping between them. Here are some key concepts:

- Pod-to-Pod networking: Each Pod is assigned a unique IP. All containers within a Pod share the network namespace, including the IP address and network ports.

- Service networking: A service in Kubernetes is an abstraction that defines a logical set of Pods and a policy by which to access them. Services enable loose coupling between dependent Pods.

- Ingress networking: Ingress exposes HTTP and HTTPS routes from outside the cluster to services within the cluster.

Network Policies and Ingress

Network Policies in Kubernetes provide a way of managing connections to pods based on labels and ports. They act like a firewall for your pods, defining who can or cannot connect to them, and they only take effect when the cluster's network plugin enforces them. Ingress, on the other hand, manages external access to the services in a cluster, typically HTTP. Ingress can provide load balancing, SSL termination, and name-based virtual hosting.
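Both objects can be sketched in a few lines. The example below is illustrative only: the namespace tenant-a, the labels, the host app.example.com, the TLS Secret example-tls, and the ingress class nginx are all hypothetical, and the Ingress assumes an ingress controller is installed and that a Service named my-service exists.

```yaml
# Allow only Pods labelled app: frontend to reach Pods labelled app: api on TCP 8080
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-frontend-to-api
  namespace: tenant-a              # hypothetical namespace
spec:
  podSelector:
    matchLabels:
      app: api                     # the Pods this policy protects
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector:
            matchLabels:
              app: frontend        # the only allowed callers
      ports:
        - protocol: TCP
          port: 8080
---
# Route external HTTPS traffic for one host name to a Service
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: example-ingress
spec:
  ingressClassName: nginx          # assumes a controller registered under this class
  tls:
    - hosts:
        - app.example.com
      secretName: example-tls      # hypothetical TLS Secret
  rules:
    - host: app.example.com        # name-based virtual hosting
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: my-service
                port:
                  number: 80
```

Once a NetworkPolicy selects a Pod for ingress, any traffic not explicitly allowed by some policy is dropped, which is why the policy reads as an allow-list.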

Workloads

In Kubernetes, a workload is an application or a part of an application running on Kubernetes. Let’s discuss different types of workloads.

- Deployments: A Deployment provides declarative updates for Pods and ReplicaSets. You describe the desired state in a Deployment, and the Deployment controller changes the actual state to the desired state.

- StatefulSets: StatefulSets are used for workloads that need stable network identifiers, stable persistent storage, and graceful deployment and scaling.

- DaemonSets: A DaemonSet ensures that all (or some) nodes run a copy of a pod. As nodes are added to the cluster, pods are added to them. As nodes are removed from the cluster, those pods are garbage collected.

- Jobs: A Job creates one or more pods and ensures that a specified number of them successfully terminate. It suits tasks that run to completion, such as batch processing.
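Of the workload types above, the Job is the one that differs most from a long-running service. Here is a minimal sketch, with a hypothetical name and a placeholder shell command standing in for real batch work:

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: example-report             # hypothetical name
spec:
  completions: 1                   # number of Pods that must finish successfully
  backoffLimit: 3                  # retries before the Job is marked failed
  template:
    spec:
      restartPolicy: Never         # Jobs require Never or OnFailure
      containers:
        - name: report
          image: busybox:1.36
          # Placeholder workload: prints a line, waits, then exits with status 0
          command: ["sh", "-c", "echo generating report && sleep 5"]
```

Unlike a Deployment, the controller does not restart the Pod after it exits successfully; the Job simply records the completion.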

Example configuration

Here’s an example of a Deployment named nginx-deployment, which spins up 3 replicas of nginx pods.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  selector:
    matchLabels:
      app: nginx
  replicas: 3
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
        - name: nginx
          image: nginx:1.14.2
          ports:
            - containerPort: 80
```

ConfigMaps and Secrets

Managing Application Configurations and Sensitive Information

In Kubernetes, ConfigMaps and Secrets are two key components that allow us to manage application configurations and sensitive information.

- ConfigMaps: These are used to store non-confidential data in key-value pairs. ConfigMaps allow you to decouple configuration artifacts from image content to keep containerized applications portable.

- Secrets: These are similar to ConfigMaps, but are used to store sensitive information like passwords, OAuth tokens, and SSH keys. Storing confidential information in a Secret is safer and more flexible than putting it verbatim in a Pod definition or in a container image. Note that Secret values are only base64-encoded by default, so pair them with RBAC restrictions and encryption at rest.
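To show how a workload actually consumes these objects, here is a minimal declarative sketch. All names (app-config, app-credentials, config-demo) and values are hypothetical, and a real password should never be committed in plain text as it is here for illustration.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: app-config                 # hypothetical name
data:
  LOG_LEVEL: info
---
apiVersion: v1
kind: Secret
metadata:
  name: app-credentials            # hypothetical name
type: Opaque
stringData:                        # written as plain text, stored base64-encoded
  DB_PASSWORD: change-me           # placeholder value
---
apiVersion: v1
kind: Pod
metadata:
  name: config-demo
spec:
  containers:
    - name: app
      image: nginx:1.14.2
      envFrom:
        - configMapRef:
            name: app-config       # every key becomes an environment variable
      env:
        - name: DB_PASSWORD
          valueFrom:
            secretKeyRef:          # inject a single key from the Secret
              name: app-credentials
              key: DB_PASSWORD
```

The container image stays unchanged across environments; only the ConfigMap and Secret differ, which is the decoupling the bullets above describe.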

Example configuration snippet

Here’s how you can create a ConfigMap and a Secret using literal values via the command line:

```shell
# Create a ConfigMap from literal values
kubectl create configmap example-config \
  --from-literal=key1=value1 \
  --from-literal=key2=value2

# Create a Secret from literal values
kubectl create secret generic example-secret \
  --from-literal=username=admin \
  --from-literal=password=secret
```

From Architectural Basics to Agentic Fleet Control

Understanding Kubernetes architecture is just the baseline. While the Control Plane and Node components brilliantly orchestrate single-cluster deployments, scaling this architecture across dozens or hundreds of clusters introduces massive operational toil.

Stop managing individual YAML files and start managing intent. By adopting an agentic Kubernetes management platform like Qovery, platform engineering teams can eliminate configuration drift, automate Day-2 maintenance, and turn complex infrastructure into a strategic advantage.

FAQs

Question: How does Kubernetes architecture support multi-tenancy for SaaS?

Answer: K8s architecture supports multi-tenancy through Namespaces, RBAC, and Network Policies, which are defined through the control plane and allow multiple customers (tenants) to share hardware while remaining logically isolated.

Question: What is the difference between the K8s Control Plane and Data Plane?

Answer: The Control Plane makes global decisions about the cluster (like scheduling), while the Data Plane (nodes) actually runs the containerized applications.

Question: Why is architecture visibility important for SREs?

Answer: Deep architectural visibility allows SREs to identify if a failure is rooted in the control plane (e.g., etcd latency) or the data plane (e.g., node resource exhaustion), reducing time-to-resolution.
