Stop tool sprawl - Welcome to Terraform/OpenTofu support

Too Many Tools, Too Much Overhead

Provisioning cloud infrastructure and deploying applications have traditionally lived in separate silos. Teams use tools like Atlantis, Spacelift, or custom runners to manage Terraform or OpenTofu. Then, they turn to ArgoCD, Flux, or Qovery to deploy their applications.

The result?

Fragmented workflows, inconsistent deployment timing, fragile CI scripts, and a constant back-and-forth between tools just to get a working environment up and running.

If your infra isn’t ready, your app deployment fails. If your app needs outputs from Terraform, someone has to wire them together manually. It works, but it’s painful and hard to scale.
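To make the manual wiring concrete, here is a minimal sketch of the kind of glue script a CI job might carry. It assumes the job has captured the JSON printed by `terraform output -json`, which maps each output name to an object with a `value` field. The `TF_OUT_` prefix and the sample output names are hypothetical, chosen only for illustration.

```python
import json
from typing import Dict


def outputs_to_env(terraform_output_json: str, prefix: str = "TF_OUT_") -> Dict[str, str]:
    """Turn the JSON from `terraform output -json` into environment-variable pairs."""
    outputs = json.loads(terraform_output_json)
    env = {}
    for name, meta in outputs.items():
        value = meta["value"]
        # Environment variables are strings, so non-string values
        # (numbers, lists, maps) are serialized back to JSON text.
        if not isinstance(value, str):
            value = json.dumps(value)
        env[prefix + name.upper()] = value
    return env


# Hypothetical sample of what `terraform output -json` could print.
sample = """
{
  "db_host": {"sensitive": false, "type": "string", "value": "db.internal.example"},
  "db_port": {"sensitive": false, "type": "number", "value": 5432}
}
"""

env_vars = outputs_to_env(sample)
```

Every pipeline that needs these values has to run, maintain, and secure a script like this, which is where the fragility tends to come from.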

The Qovery Platform: One Environment, One Control Plane

Qovery was built to simplify the application lifecycle by unifying it inside your own Kubernetes cluster, on your infrastructure, with your security, under your control.

Now, with native Terraform and OpenTofu support, Qovery extends that same control to infrastructure provisioning. You can deploy everything from a single environment: no CI glue, no handoffs, no tool sprawl.

This feature isn’t a side add-on. It’s a natural extension of how Qovery environments work.

You can specify deployment order between infrastructure resources and applications, pass outputs as environment variables for workloads, and manage the full stack lifecycle directly in Qovery, all running securely inside your Kubernetes cluster.

Outcomes for Your Team

With Terraform & OpenTofu support, you’ll get:

- Fewer scripts: no more custom CI jobs to glue Terraform to app deployments

- Consistent deployments: define the full stack once, deploy it the same way every time

- Less waiting on DevOps: developers can self-serve infra with guardrails

- No tool sprawl: one platform to manage infra and apps together

A Realistic Example: From Three Tools to One Platform

One of our users was running Terraform through Atlantis, applications through ArgoCD, and using CI scripts to pass values between them. The process worked but was fragile and hard to scale. Any change required coordination across repos, tooling, and teams.

They moved to Qovery’s native Terraform support, defined their infrastructure and applications in the same environment, set the proper deployment order (RDS → seed job → backend), and removed dozens of lines of CI logic. Now, it’s all handled by Qovery: in one flow, with full visibility.
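The RDS → seed job → backend ordering is a small dependency graph. The sketch below is not Qovery's implementation. It only illustrates the idea using Python's standard `graphlib` module (Python 3.9+), and the service names are hypothetical labels for the three steps.

```python
from graphlib import TopologicalSorter

# Each (hypothetical) service maps to the services it must wait for.
depends_on = {
    "rds": set(),
    "seed-job": {"rds"},
    "backend": {"seed-job"},
}


def deployment_order(graph):
    """Return services in an order where every dependency is deployed first."""
    # static_order() raises graphlib.CycleError if the dependencies form a cycle.
    return list(TopologicalSorter(graph).static_order())


order = deployment_order(depends_on)
```

Declaring the dependencies once and deriving the order from them is what replaces the hand-sequenced CI steps.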

Read more: Cut Tool Sprawl: Automate Your Tech Stack with a Unified Platform

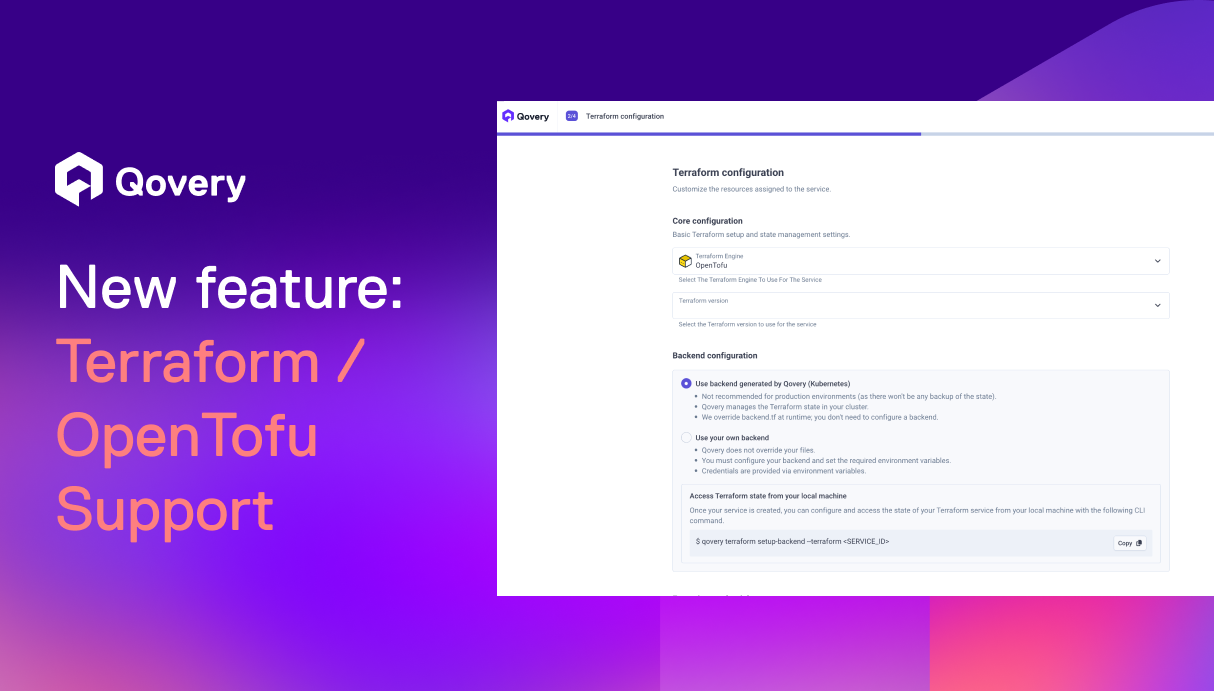

Deploy Your Manifest in 3 Simple Steps

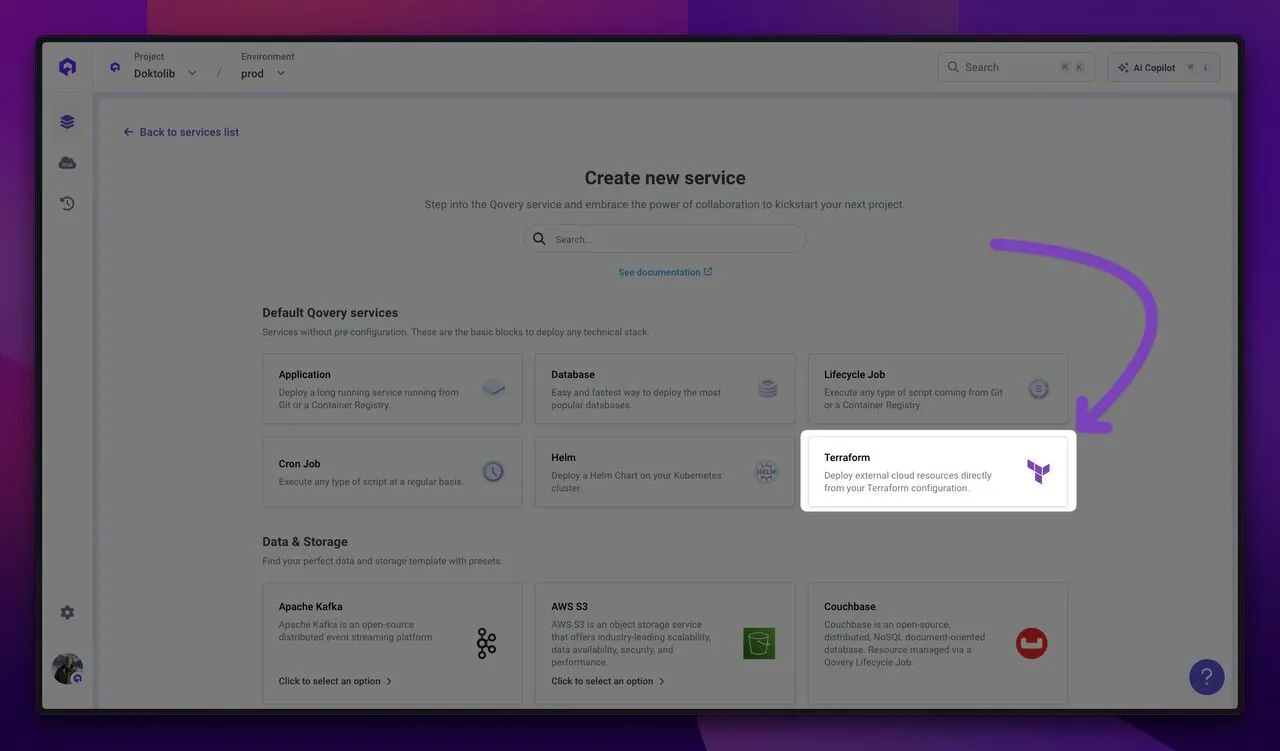

- Add a new service of type “Terraform” inside your existing Qovery environment

- Connect the Git repository containing your Terraform or OpenTofu manifest, set the input variables that Qovery automatically detects from the manifest, and define the state location and the deployment order

- Execute plan or apply: Qovery will manage the lifecycle, handle remote state, and inject outputs as environment variables for your other services to consume
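On the consuming side, a service only has to read its configuration from the environment. The sketch below shows one way an application might do that and fail fast when a value is missing. The variable names `DB_HOST` and `DB_PORT` are assumptions for illustration; the names Qovery actually injects depend on your manifest's outputs and your environment configuration.

```python
import os


def load_database_settings(environ=os.environ):
    """Read database settings from environment variables, failing fast if any are missing."""
    required = ("DB_HOST", "DB_PORT")  # hypothetical variable names
    missing = [name for name in required if name not in environ]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return {"host": environ["DB_HOST"], "port": int(environ["DB_PORT"])}


# Passing a plain dict keeps the example independent of the real process environment.
settings = load_database_settings({"DB_HOST": "db.internal.example", "DB_PORT": "5432"})
```

Failing at startup with a clear message makes a misordered or incomplete deployment much easier to diagnose than a connection error later on.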

Try It Today

Ready to simplify your infra and app deployments?

Try it out today by adding a Terraform service to an existing environment.

Need help migrating from Atlantis or custom scripts? We’re here to help.
